Can you access the bits that represent an IEEE754 single precision float in a C++14 constant expression (constexpr)?

(Why would you want to do that? Maybe you want to run a fast inverse square root at compile time. Or maybe you want to do something that is actually useful. I wanted to know if it could be done.)

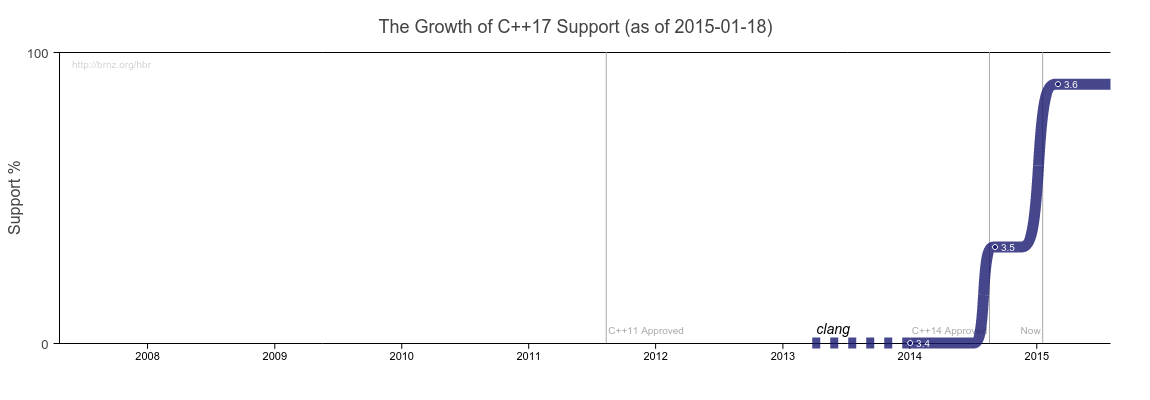

For context: this article is based on experiences using gcc-5.3.0 and clang-3.7.1 with -std=c++14 -march=native on a Sandy Bridge Intel i7. Where I reference sections from the C++ standard, I’m referring to the November 2014 draft.

Before going further, I’ll quote 5.20.6 from the standard:

Since this International Standard imposes no restrictions on the accuracy of floating-point operations, it is unspecified whether the evaluation of a floating-point expression during translation yields the same result as the evaluation of the same expression (or the same operations on the same values) during program execution.88

88) Nonetheless, implementations are encouraged to provide consistent results, irrespective of whether the evaluation was performed during translation and/or during program execution.

In this post, I document things that worked (and didn’t work) for me. You may have a different experience.

Methods of conversion that won’t work

(Error text from g++-5.3.0)

You can’t access the bits of a float via a typecast pointer [which is undefined behavior, and covered by 5.20.2.5]:

constexpr uint32_t bits_cast(float f)

{

return *(uint32_t*)&f; // [2]

}

error:

accessing value of 'f' through a 'uint32_t {aka unsigned int}' glvalue

in a constant expression

You can’t convert it via a reinterpret cast [5.20.2.13]

constexpr uint32_t bits_reinterpret_cast(float f)

{

const unsigned char* cf = reinterpret_cast<const unsigned char*>(&f);

// endianness notwithstanding

return (cf[3] << 24) | (cf[2] << 16) | (cf[1] << 8) | cf[0];

}

error: '*(cf + 3u)' is not a constant expression

(gcc reports an error with the memory access, but does not object to the reinterpret_cast. clang produces a specific error for the cast.)

You can’t convert it through a union [gcc, for example, permits this for non-constant expressions, but the standard forbids it in 5.20.2.8]:

constexpr uint32_t bits_union(float f)

{

union Convert {

uint32_t u;

float f;

constexpr Convert(float f_) : f(f_) {}

};

return Convert(f).u;

}

error: accessing 'bits_union(float)::Convert::u' member instead of initialized 'bits_union(float)::Convert::f' member in constant expression

You can’t use memcpy() [5.20.2.2]:

constexpr uint32_t bits_memcpy(float f)

{

uint32_t u = 0;

memcpy(&u, &f, sizeof f);

return u;

}

error: 'memcpy(((void*)(&u)), ((const void*)(&f)), 4ul)' is not a constant expression

And you can’t define a constexpr memcpy()-like function that is capable of the task [5.20.2.11]:

constexpr void* memcpy(void* dest, const void* src, size_t n)

{

char* d = (char*)dest;

const char* s = (const char*)src;

while(n-- > 0)

*d++ = *s++;

return dest;

}

constexpr uint32_t bits_memcpy(float f)

{

uint32_t u = 0;

memcpy(&u, &f, sizeof f);

return u;

}

error: accessing value of 'u' through a 'char' glvalue in a constant expression

So what can you do?

Floating point operations in constant expressions

For constexpr float f = 2.0f, g = 2.0f the following operations are available [as they are not ruled out by anything I can see in 5.20]:

- Comparison of floating point values e.g.

static_assert(f == g, "not equal"); - Floating point arithmetic operations e.g.

static_assert(f * 2.0f == 4.0f, "arithmetic failed"); - Casts from float to integral value, often with well-defined semantics e.g.

constexpr int i = (int)2.0f; static_assert(i == 2, "conversion failed");

So I wrote a function (uint32_t bits(float)) that will return the binary representation of an IEEE754 single precision float. The full function is at the end of this post. I’ll go through the various steps required to produce (my best approximation of) the desired result.

1. Zero

When bits() is passed the value zero, we want this behavior:

static_assert(bits(0.0f) == 0x00000000);

And we can have it:

if (f == 0.0f)

return 0;

Nothing difficult about that.

2. Negative zero

In IEEE754 land, negative zero is a thing. Ideally, we’d like this behavior:

static_assert(bits(-0.0f) == 0x80000000)

But the check for zero also matches negative zero. Negative zero is not something that the C++ standard has anything to say about, given that IEEE754 is an implementation choice [3.9.1.8: “The value representation of floating-point types is implementation defined”]. My compilers treat negative zero the same as zero for all comparisons and arithmetic operations. As such, bits() returns the wrong value when considering negative zero, returning 0x00000000 rather than the desired 0x80000000.

I did look into other methods for detecting negative zero in C++, without finding something that would work in a constant expression. I have seen divide by zero used as a way to detect negative zero (resulting in ±infinity, depending on the sign of the zero), but that doesn’t compile in a constant expression:

constexpr float r = 1.0f / -0.0f;

error: '(1.0e+0f / -0.0f)' is not a constant expression

and divide by zero is explicitly named as undefined behavior in 5.6.4, and so by 5.20.2.5 is unusable in a constant expression.

Result: negative zero becomes positive zero.

3. Infinity

We want this:

static_assert(bits(INFINITY) == 0x7f800000);

And this:

else if (f == INFINITY)

return 0x7f800000;

works as expected.

4. Negative Infinity

Same idea, different sign:

static_assert(bits(-INFINITY) == 0xff800000);

else if (f == -INFINITY)

return 0xff800000;

Also works.

5. NaNs

There’s no way to generate arbitrary NaN constants in a constant expression that I can see (not least because casting bits to floats isn’t possible in a constant expression, either), so it seems impossible to get this right in general.

In practice, maybe this is good enough:

static_assert(bits(NAN) == 0x7fc00000);

NaN values can be anywhere in the range of 0x7f800001 -- 0x7fffffff and 0xff800001 -- 0xffffffff. I have no idea as to the specific values that are seen in practice, nor what they mean. 0x7fc00000 shows up in /usr/include/bits/nan.h on the system I’m using to write this, so — right or wrong — I’ve chosen that as the reference value.

It is possible to detect a NaN value in a constant expression, but not its payload. (At least that I’ve been able to find). So there’s this:

else if (f != f) // NaN

return 0x7fc00000; // This is my NaN...

Which means that of the 2*(223-1) possible NaNs, one will be handled correctly (in this case, 0x7fc00000). For the other 16,777,213 values, the wrong value will be returned (in this case, 0x7fc00000).

So… partial success? NaNs are correctly detected, but the bits for only one NaN value will be returned correctly.

(On the other hand, the probability that it will ever matter could be stored as a denormalized float)

6. Normalized Values

// pseudo-code

static_assert(bits({ 0x1p-126f, ..., 0x1.ffff7p127})

== { 0x00800000, ..., 0x7f7fffff});

static_assert(bits({ -0x1p-126f, ..., -0x1.ffff7p127})

== { 0x80800000, ..., 0xff7fffff});

[That 0x1pnnnf format happens to be a convenient way to represent exact values that can be stored as binary floating point numbers]

It is possible to detect and correctly construct bits for every normalized value. It does requires a little care to avoid truncation and undefined behavior. I wrote a few different implementations — the one that I describe here requires relatively little code, and doesn’t perform terribly [0].

The first step is to find and clear the sign bit. This simplifies subsequent steps.

bool sign = f < 0.0f; float abs_f = sign ? -f : f;

Now we have abs_f — it’s positive, non-zero, non-infinite, and not a NaN.

What happens when a float is cast to an integral type?

uint64_t i = (uint64_t)f;

The value of f will be stored in i, according to the following rules:

- The value will be rounded towards zero which, for positive values, means truncation of any fractional part.

- If the value in

fis too large to be represented as auint64_t(i.e.f> 264-1) the result is undefined.

If truncation takes place, data is lost. If the number is too large, the result is (probably) meaningless.

For our conversion function, if we can scale abs_f into a range where it is not larger than (264-1), and it has no fractional part, we have access to an exact representation of the bits that make up the float. We just need to keep track of the amount of scaling being done.

Single precision IEEE 754 floating point numbers have, at most, (23+1) bits of precision (23 in the significand, 1 implicit). This means that we can scale down large numbers and scale up small numbers into the required range.

Multiplying by powers of two change only the exponent of the float, and leave the significand unmodified. As such, we can arbitrarily scale a float by a power of two and — so long as we don’t over- or under-flow the float — we will not lose any of the bits in the significand.

For the sake of simplicity (believe it or not [1]), my approach is to scale abs_f in steps of 241 so that (abs_f ≥ 287) like so:

int exponent = 254;

while(abs_f < 0x1p87f)

{

abs_f *= 0x1p41f;

exponent -= 41;

}

If abs_f ≥ 287, the least significant bit of abs_f, if set, is 2(87-23)==264.

Next, abs_f is scaled back down by 264 (which adds no fractional part as the least significant bit is 264) and converted to an unsigned 64 bit integer.

uint64_t a = (uint64_t)(abs_f * 0x1p-64f);

All of the bits of abs_f are now present in a, without overflow or truncation. All that is needed now is to determine where they are:

int lz = count_leading_zeroes(a);

adjust the exponent accordingly:

exponent -= lz;

and construct the result:

uint32_t significand = (a << (lz + 1)) >> (64 - 23); // [3] return (sign << 31) | (exponent << 23) | significand;

With this, we have correct results for every normalized float.

7. Denormalized Values

// pseudo-code

static_assert(bits({ 0x1.0p-149f, ..., 0x1.ffff7p-127f})

== { 0x00000001, ..., 0x007fffff});

static_assert(bits({ -0x1.0p-149f, ..., -0x1.ffff7p-127f})

== { 0x80000001, ..., 0x807fffff});

The final detail is denormalized values. Handling of normalized values as presented so far fails because denormals will have additional leading zeroes. They are fairly easy to account for:

if (exponent <= 0)

{

exponent = 0;

lz = 8 - 1;

}

To attempt to demystify that lz = 8 - 1 a little: there are 8 leading bits that aren’t part of the significand of a denormalized single precision float after the repeated 2-41 scaling that has taken place. There is also no leading 1 bit that is present in all normalized numbers (which is accounted for in the calculation of significand above as (lz + 1)). So the leading zero count (lz) is set to account for the 8 bits of offset to the start of the denormalized significand, minus the one that the subsequent calculation assumes it needs to skip over.

And that’s it. All the possible values of a float are accounted for.

(Side note: If you’re compiling with -ffast-math, passing denormalized numbers to bits() will return invalid results. That’s -ffast-math for you. With gcc or clang, you could add an #ifdef __FAST_MATH__ around the test for negative exponent.)

Conclusion

You can indeed obtain the bit representation of a floating point number at compile time. Mostly. Negative zero is wrong, NaNs are detected but otherwise not accurately converted.

Enjoy your compile-time bit-twiddling!

The whole deal:

// Based on code from

// https://graphics.stanford.edu/~seander/bithacks.html

constexpr int count_leading_zeroes(uint64_t v)

{

constexpr char bit_position[64] = {

0, 1, 2, 7, 3, 13, 8, 19, 4, 25, 14, 28, 9, 34, 20, 40,

5, 17, 26, 38, 15, 46, 29, 48, 10, 31, 35, 54, 21, 50, 41, 57,

63, 6, 12, 18, 24, 27, 33, 39, 16, 37, 45, 47, 30, 53, 49, 56,

62, 11, 23, 32, 36, 44, 52, 55, 61, 22, 43, 51, 60, 42, 59, 58 };

v |= v >> 1; // first round down to one less than a power of 2

v |= v >> 2;

v |= v >> 4;

v |= v >> 8;

v |= v >> 16;

v |= v >> 32;

v = (v >> 1) + 1;

return 63 - bit_position[(v * 0x0218a392cd3d5dbf)>>58]; // [3]

}

constexpr uint32_t bits(float f)

{

if (f == 0.0f)

return 0; // also matches -0.0f and gives wrong result

else if (f == INFINITY)

return 0x7f800000;

else if (f == -INFINITY)

return 0xff800000;

else if (f != f) // NaN

return 0x7fc00000; // This is my NaN...

bool sign = f < 0.0f;

float abs_f = sign ? -f : f;

int exponent = 254;

while(abs_f < 0x1p87f)

{

abs_f *= 0x1p41f;

exponent -= 41;

}

uint64_t a = (uint64_t)(abs_f * 0x1p-64f);

int lz = count_leading_zeroes(a);

exponent -= lz;

if (exponent <= 0)

{

exponent = 0;

lz = 8 - 1;

}

uint32_t significand = (a << (lz + 1)) >> (64 - 23); // [3]

return (sign << 31) | (exponent << 23) | significand;

}

[0] Why does runtime performance matter? Because that’s how I tested the conversion function while implementing it. I was applying Bruce Dawson’s advice for testing floats and the quicker I found out that I’d broken the conversion the better. For the implementation described in this post, it takes about 97 seconds to test all four billion float values on my laptop — half that time if I wasn’t testing negative numbers (which are unlikely to cause problems due to the way I handle the sign bit). The implementation I’ve described in this post is not the fastest solution to the problem, but it is relatively compact, and well behaved in the face of -ffast-math.

Admission buried in a footnote: I have not validated correct behavior of this code for every floating point number in actual compile-time constant expressions. Compile-time evaluation of four billion invocations of bits() takes more time than I’ve been willing to invest so far.

[1] It is conceptually simpler to multiply abs_f by two (or one half) until the result is exactly positioned so that no leading zero count is required after the cast — at least, that was what I did in my first attempt. The approach described here was found to be significantly faster. I have no doubt that better-performing constant-expression-friendly approaches exist.

[2] Update 2016-03-28: Thanks to satbyy for pointing out the missing ampersand — it was lost sometime after copying the code into the article.

[3] Update 2016-03-28: Thanks to louiswins for pointing out additional code errors.